RESTai

AIaaS (AI as a Service) — Create AI projects and consume them via a simple REST API. RAG, Agents, Visual Logic, Inference — all in one platform.

A complete AI platform — not just another wrapper. Full Web UI, analytics, security, and enterprise features out of the box.

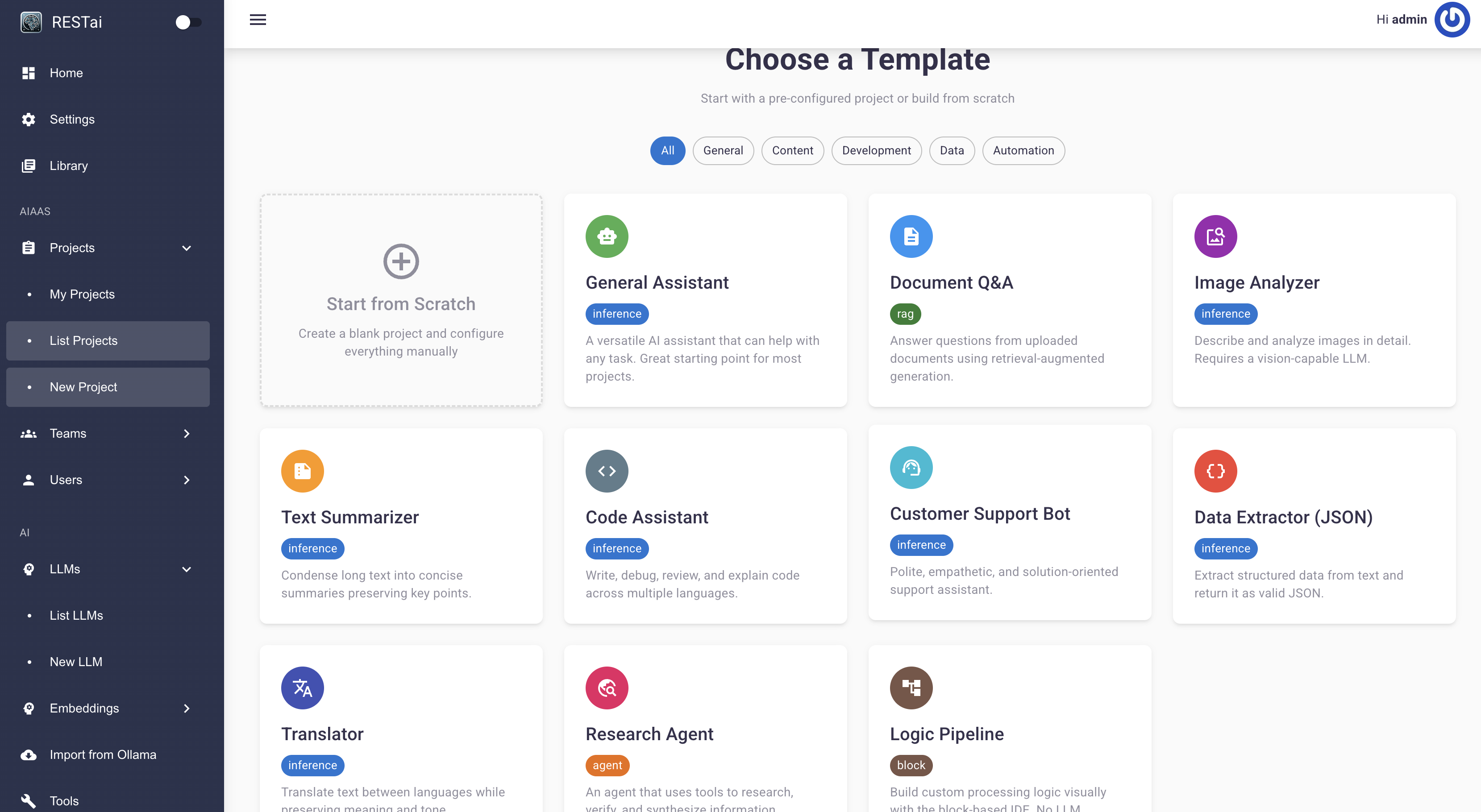

RAG with SQL-to-NL and auto-sync, Agents with MCP tools, Block visual logic, and direct Inference — all project types in one platform.

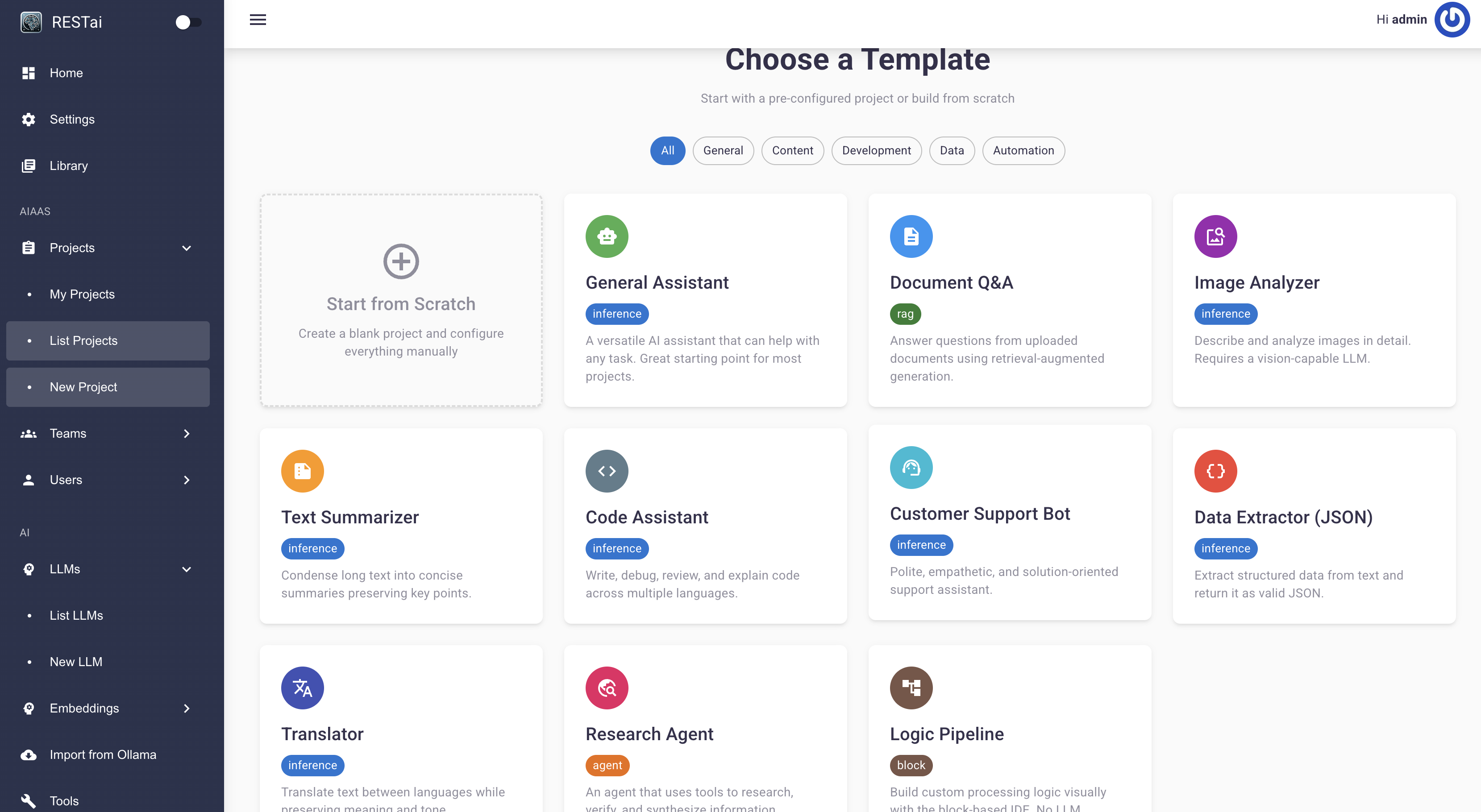

React dashboard with token tracking, cost analytics, latency monitoring, and per-project usage charts. Not just an API.

Supported LLM providers: OpenAI, Anthropic, Ollama, Gemini, Groq, LiteLLM, vLLM, Azure, and any OpenAI-compatible endpoint.

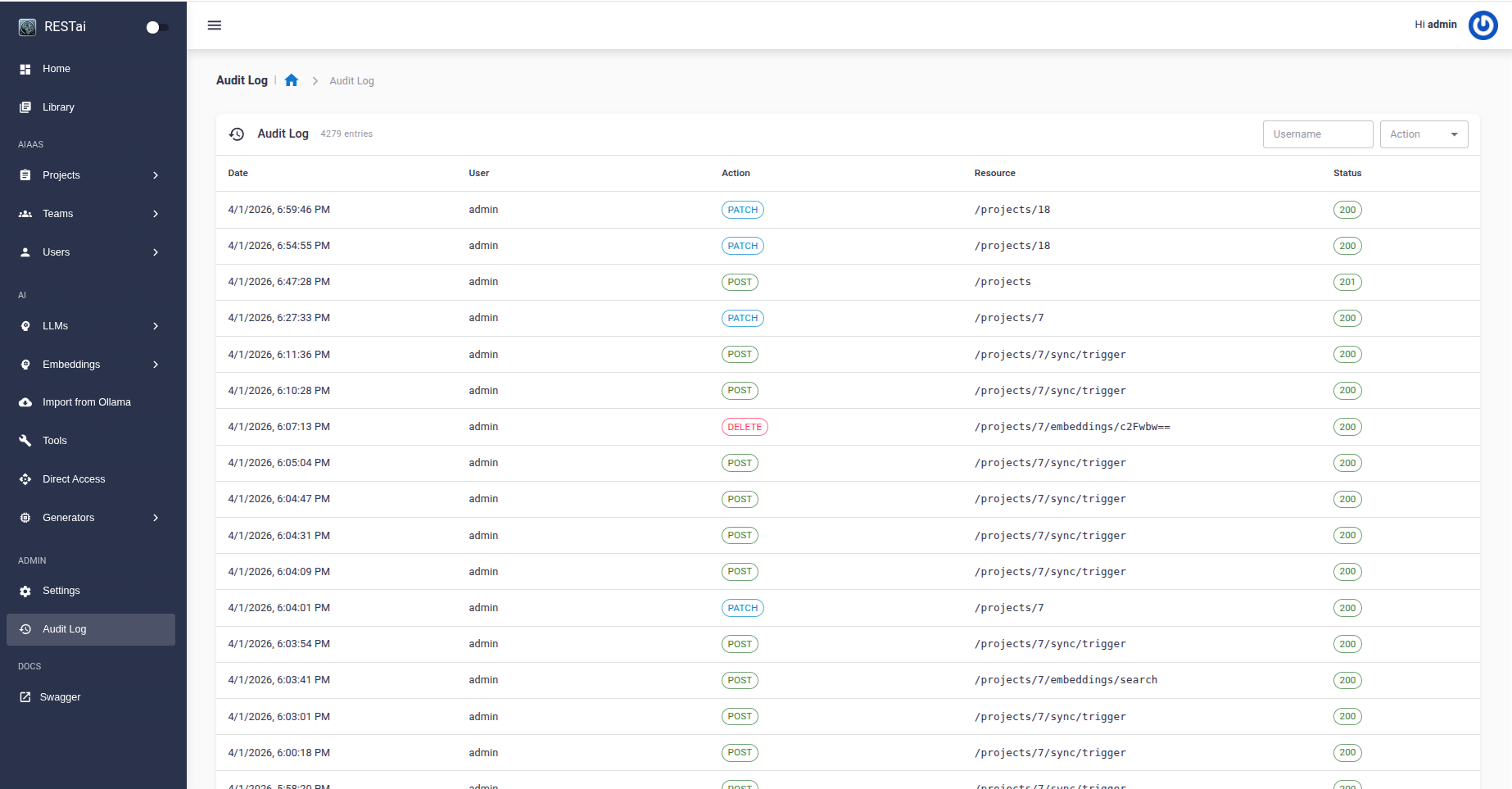

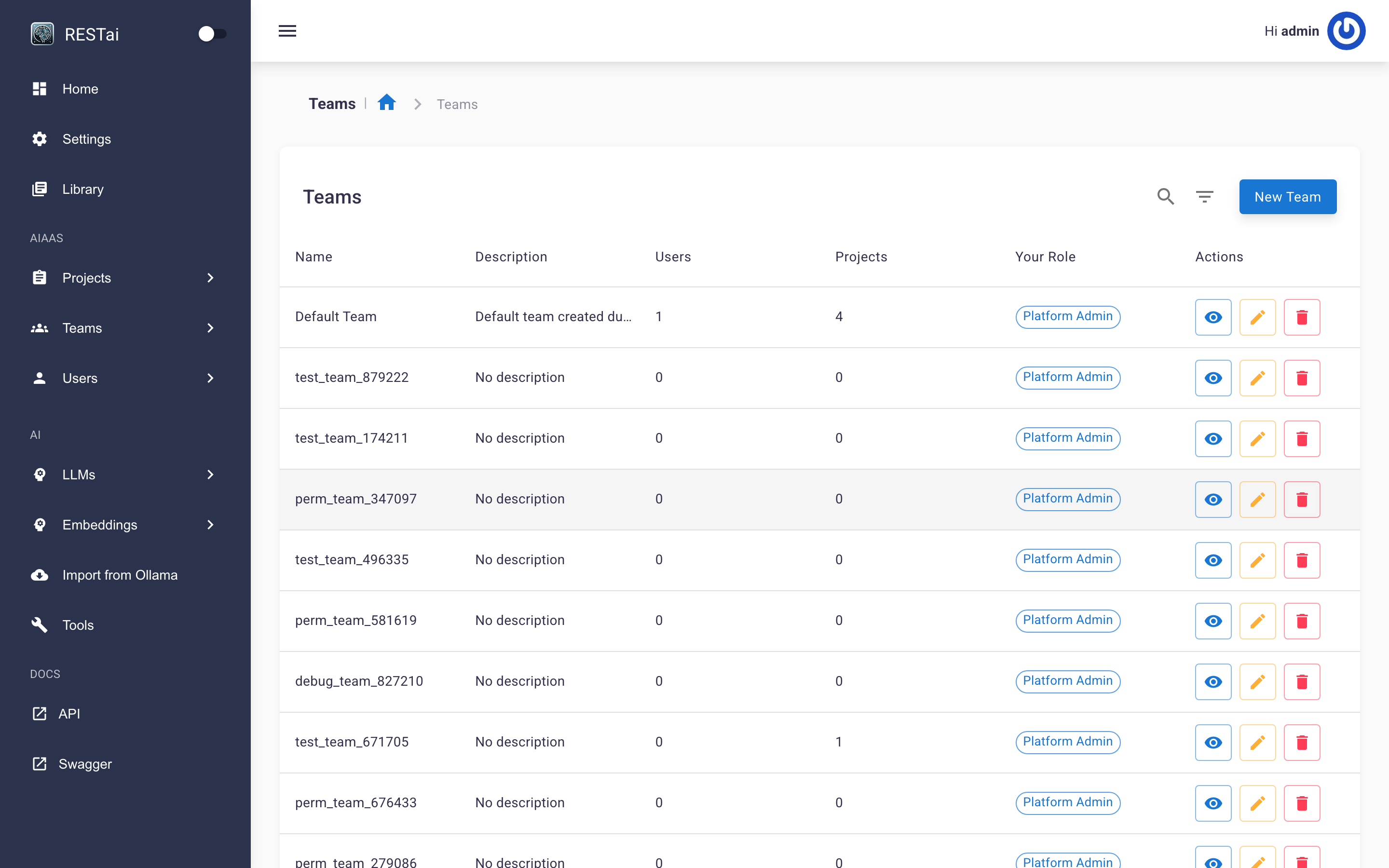

Teams, RBAC, OAuth/LDAP/OIDC, TOTP 2FA, input/output guardrails, audit logging, and per-project rate limiting.

Model Context Protocol support for unlimited agent integrations. Connect any MCP server via HTTP/SSE or stdio.

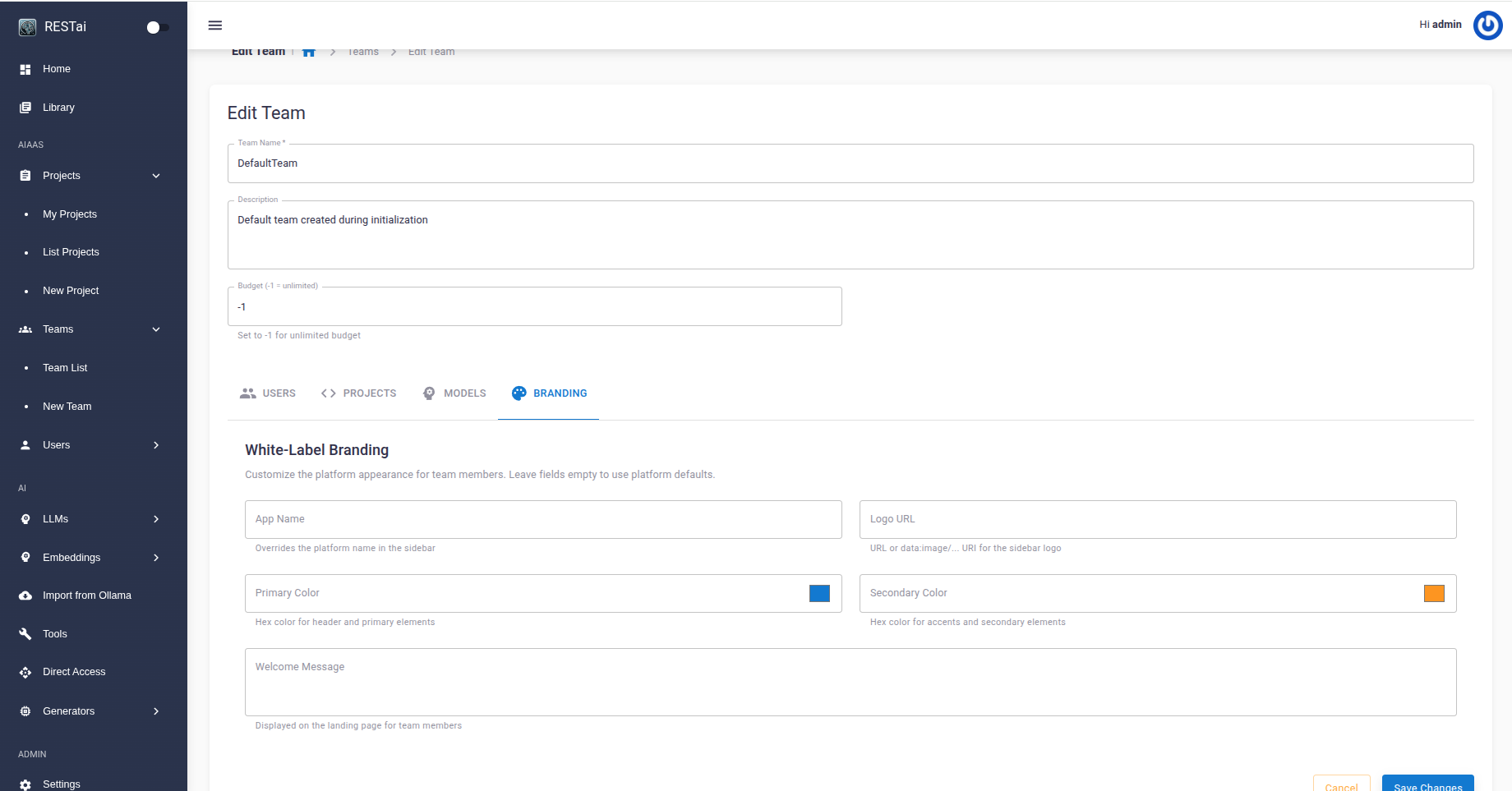

Custom logos, colors, and app names per team. Built-in knowledge sync from S3, Confluence, SharePoint, and Google Drive.

Track token usage, costs, latency, and project activity from a centralized dashboard. Daily charts for tokens, costs, and response latency per project — identify performance regressions at a glance.

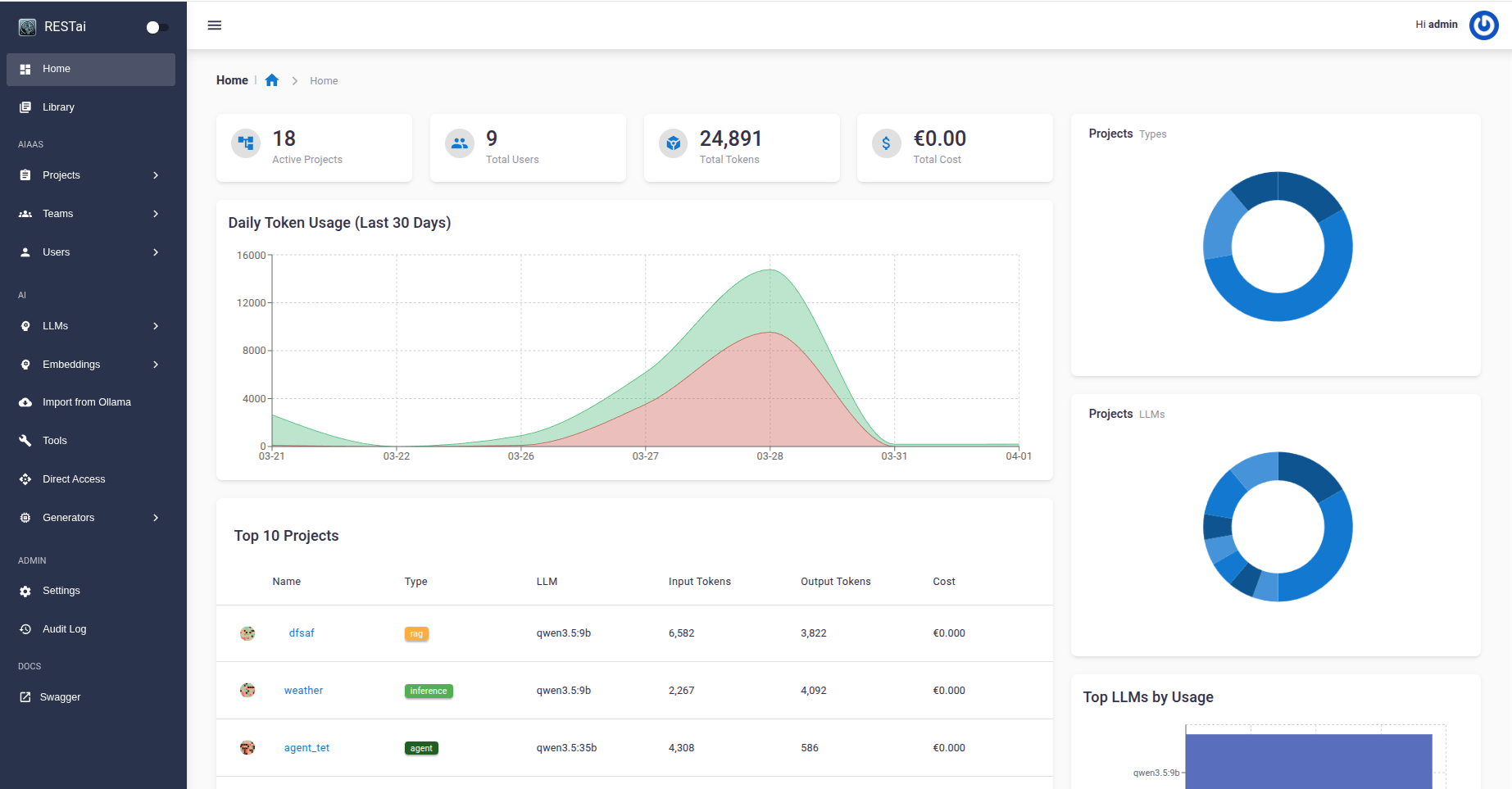

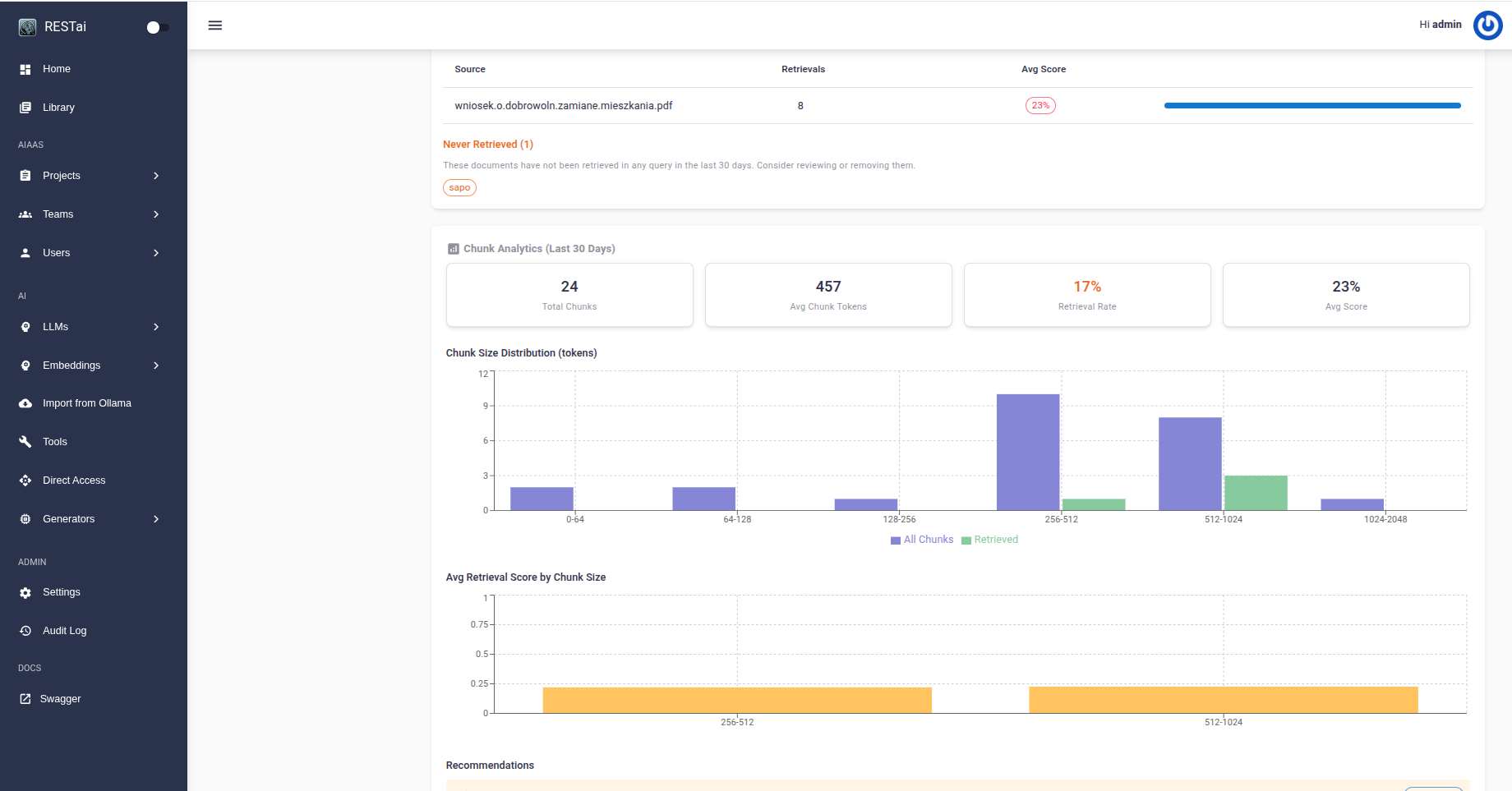

Upload documents and query them with LLM-powered retrieval. Multiple vector stores, ColBERT and LLM-based reranking, and natural language to SQL.
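To make the retrieve-then-rerank idea concrete, here is a minimal sketch of a two-stage pipeline. It is not RESTai's implementation: the bag-of-words cosine score stands in for a vector store, the term-overlap score stands in for a ColBERT or LLM-based reranker, and every function name is hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 3) -> list[str]:
    """Stage 1 (stand-in for a vector store): keep the k most similar docs."""
    q = Counter(tokenize(query))
    ranked = sorted(
        docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True
    )
    return ranked[:k]


def rerank(query: str, candidates: list[str], top_n: int = 1) -> list[str]:
    """Stage 2 (stand-in for a reranker): order by share of query terms covered."""
    q_terms = set(tokenize(query))

    def coverage(doc: str) -> float:
        if not q_terms:
            return 0.0
        return len(q_terms & set(tokenize(doc))) / len(q_terms)

    return sorted(candidates, key=coverage, reverse=True)[:top_n]


if __name__ == "__main__":
    docs = [
        "Invoices are due within 30 days",
        "The cafeteria opens at noon",
        "Late invoices incur a fee",
    ]
    query = "when are invoices due"
    print(rerank(query, retrieve(query, docs, k=2)))
```

The first stage is cheap and recall-oriented, while the second spends more effort on a short candidate list. That split is why a reranker is usually applied after retrieval rather than over the whole corpus.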

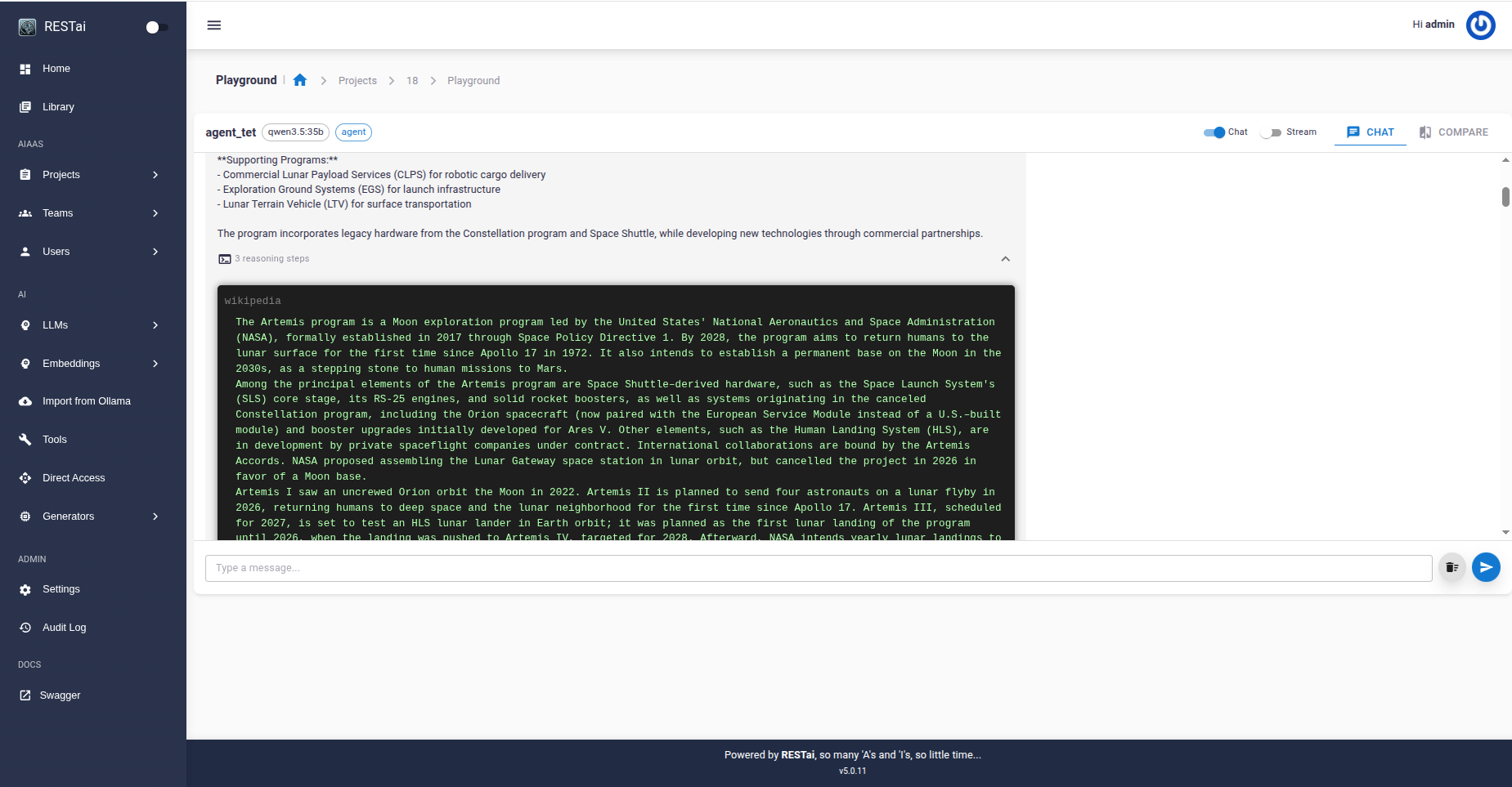

Zero-shot ReAct agents with built-in tools and MCP (Model Context Protocol) server support. Connect any MCP-compatible server via HTTP/SSE or stdio for unlimited tool access.
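The ReAct pattern alternates between model output (a thought plus an action) and tool observations until the model emits a final answer. The sketch below illustrates that loop only; it is not RESTai's agent code. The `add` tool, the `Action: name[arg]` text format, and the scripted stand-in for an LLM are all assumptions made for the example.

```python
import re
from typing import Callable

# Hypothetical tool registry; a real agent would expose built-in or MCP tools.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split(","))),
}


def scripted_model(transcript: str) -> str:
    """Stand-in for an LLM: requests one tool call, then answers."""
    if "Observation:" not in transcript:
        return "Thought: I need to add the numbers.\nAction: add[2,3]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have the result.\nFinal Answer: {observation}"


def react(question: str, model: Callable[[str], str], max_steps: int = 5) -> str:
    """Run the thought/action/observation loop until a final answer appears."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = model(transcript)
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        match = re.search(r"Action: (\w+)\[(.*?)\]", reply)
        if match is None:
            raise ValueError("model produced neither an action nor an answer")
        name, arg = match.groups()
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        # Feed the tool result back so the next model call can use it.
        transcript += f"Observation: {observation}\n"
    raise RuntimeError("no final answer within max_steps")


if __name__ == "__main__":
    print(react("What is 2 + 3?", scripted_model))
```

The `max_steps` cap is a common safeguard so that a model that never produces a final answer cannot loop indefinitely.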

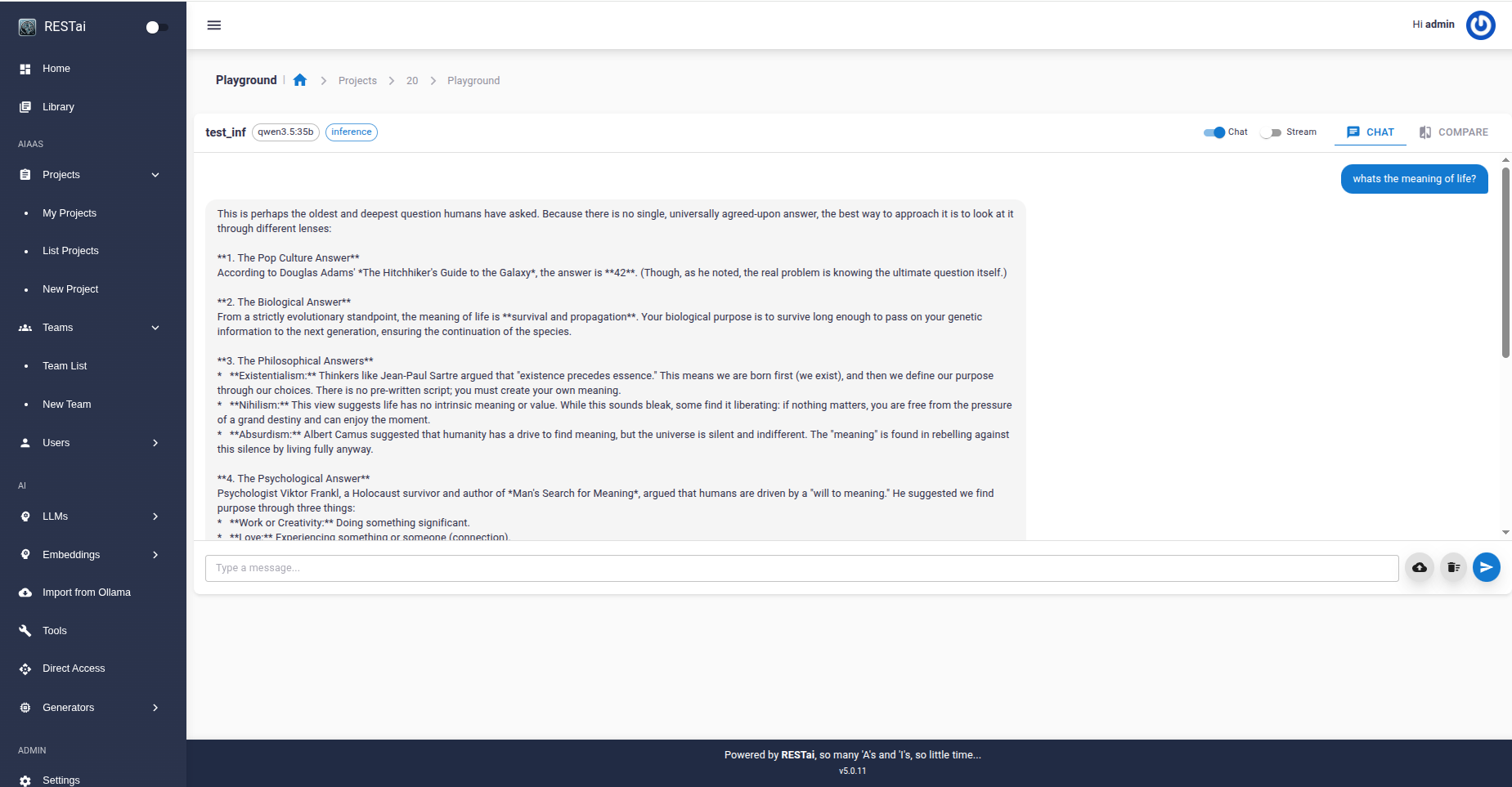

Create and manage AI projects with their own LLM, system prompt, tools, and configuration. Test instantly in the built-in chat playground with streaming responses and multimodal support.

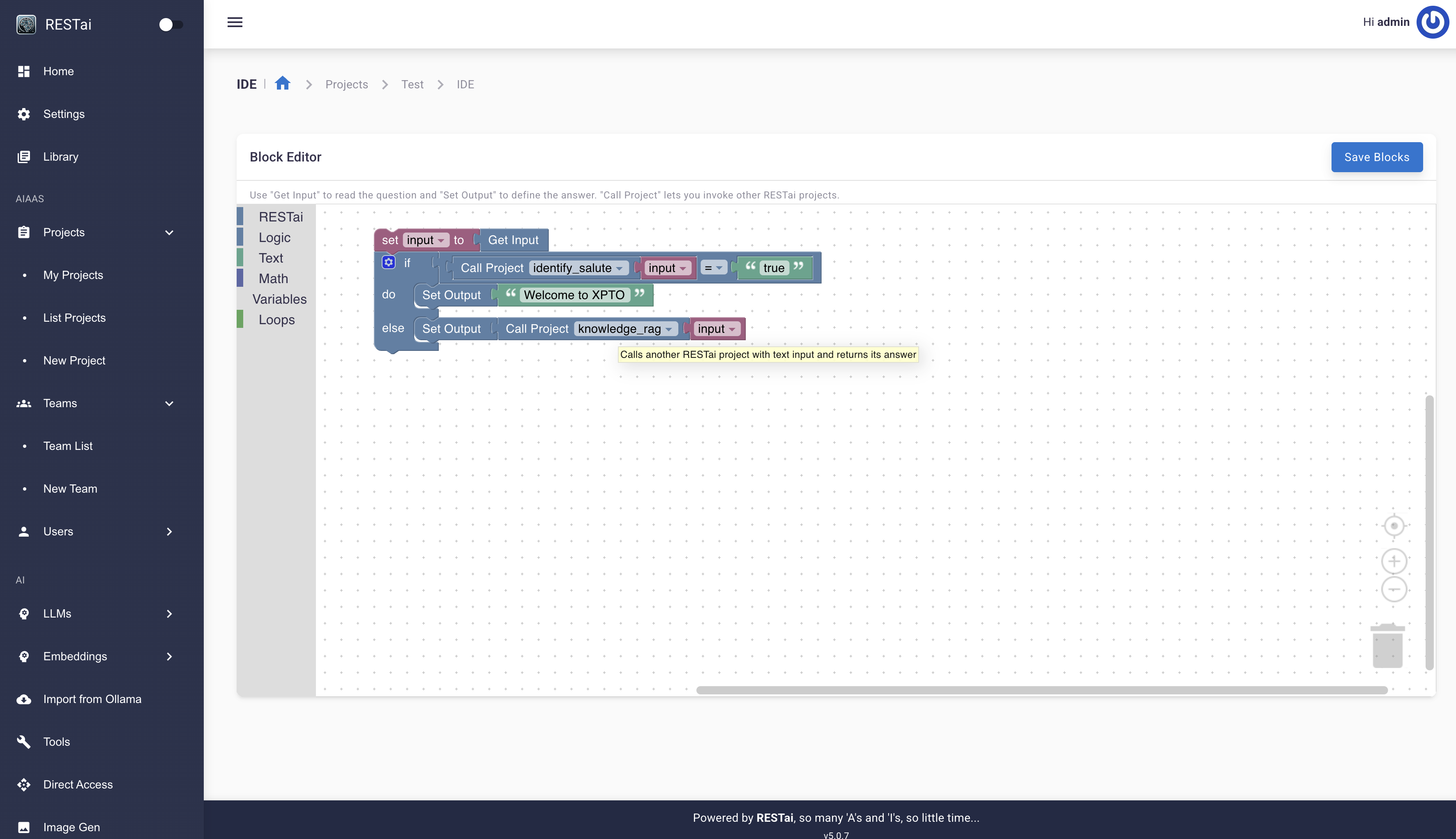

Build processing logic visually using a Blockly-based IDE — no LLM required. Drag-and-drop blocks to define how input is transformed into output. Use the "Call Project" block to compose AI pipelines.

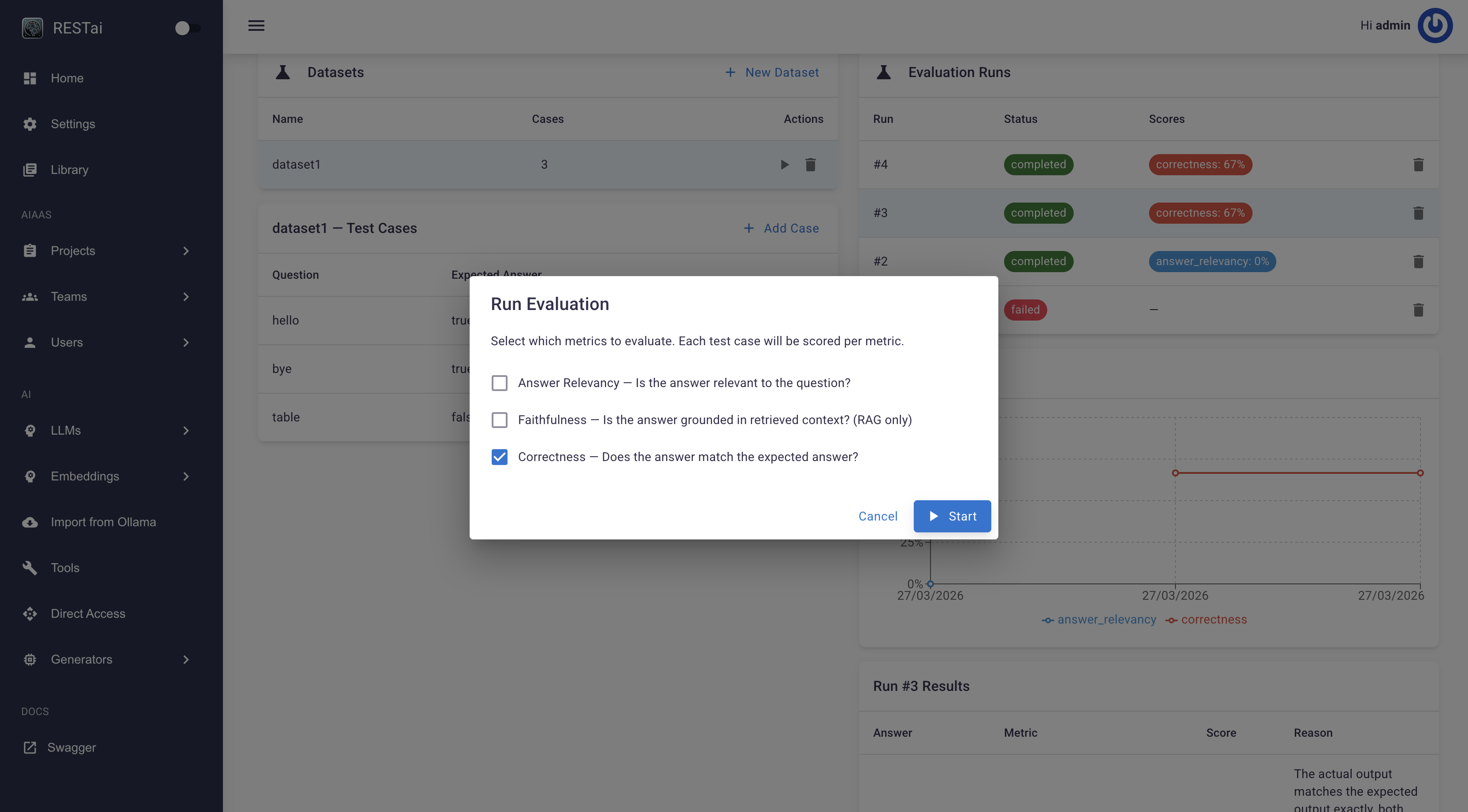

Built-in evaluation system to measure and track AI project quality over time. Create test datasets, run evaluations with multiple metrics, and visualize score trends.
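As a rough illustration of running a test dataset through several metrics, the sketch below scores a project's answers and averages each metric. The metric names, the dataset shape, and the `project` callable are hypothetical. RESTai's actual metrics and data model may differ.

```python
from statistics import mean
from typing import Callable


def exact_match(expected: str, actual: str) -> float:
    """1.0 when the answers match, ignoring case and surrounding whitespace."""
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def token_recall(expected: str, actual: str) -> float:
    """Share of expected tokens that also appear in the actual answer."""
    expected_tokens = set(expected.lower().split())
    if not expected_tokens:
        return 1.0
    return len(expected_tokens & set(actual.lower().split())) / len(expected_tokens)


# Hypothetical metric registry; each metric maps (expected, actual) to a score.
METRICS: dict[str, Callable[[str, str], float]] = {
    "exact_match": exact_match,
    "token_recall": token_recall,
}


def evaluate(
    project: Callable[[str], str], dataset: list[tuple[str, str]]
) -> dict[str, float]:
    """Run every question through the project and average each metric."""
    outputs = [(expected, project(question)) for question, expected in dataset]
    return {
        name: mean(metric(expected, actual) for expected, actual in outputs)
        for name, metric in METRICS.items()
    }


if __name__ == "__main__":
    answers = {"capital of France?": "paris", "2+2?": "The answer is 5"}
    dataset = [("capital of France?", "Paris"), ("2+2?", "4")]
    print(evaluate(answers.__getitem__, dataset))
```

Storing the resulting dictionary per run is what makes it possible to plot score trends over time.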

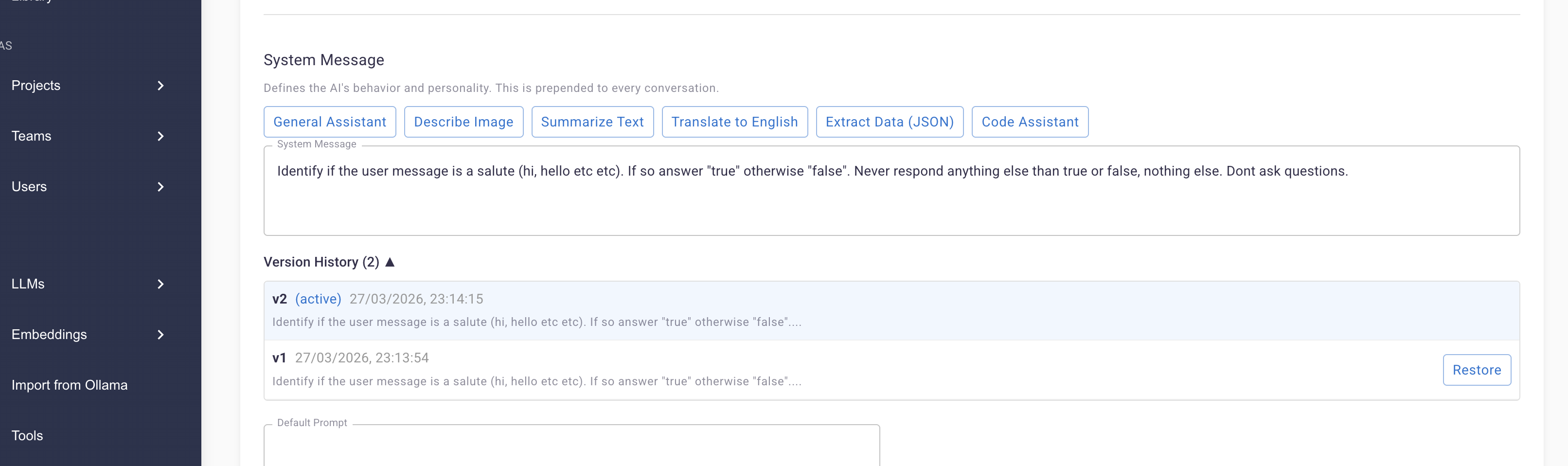

Every system prompt change is automatically versioned. Browse history, compare versions, and restore any previous prompt. Eval runs link to prompt versions for A/B comparison.
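One way to picture automatic prompt versioning is an append-only history, sketched below. The class and method names are hypothetical, and treating a restore as a new version is an assumption rather than documented RESTai behavior.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class PromptHistory:
    """Append-only list of system prompts; version numbers start at 1."""

    versions: list[str] = field(default_factory=list)

    def update(self, prompt: str) -> int:
        """Record a new version only if the prompt changed; return the current version."""
        if not self.versions or self.versions[-1] != prompt:
            self.versions.append(prompt)
        return len(self.versions)

    def diff(self, old: int, new: int) -> list[str]:
        """Unified diff between two versions, one output line per list item."""
        return list(
            difflib.unified_diff(
                self.versions[old - 1].splitlines(),
                self.versions[new - 1].splitlines(),
                fromfile=f"v{old}",
                tofile=f"v{new}",
                lineterm="",
            )
        )

    def restore(self, version: int) -> int:
        """Assumption: restoring re-applies an old prompt as a new version."""
        return self.update(self.versions[version - 1])


if __name__ == "__main__":
    history = PromptHistory()
    history.update("You are helpful.")
    history.update("You are terse.")
    print("\n".join(history.diff(1, 2)))
```

Because versions are never overwritten, an evaluation run can reference the version number it was executed against, which is what allows side-by-side comparison of two prompts.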

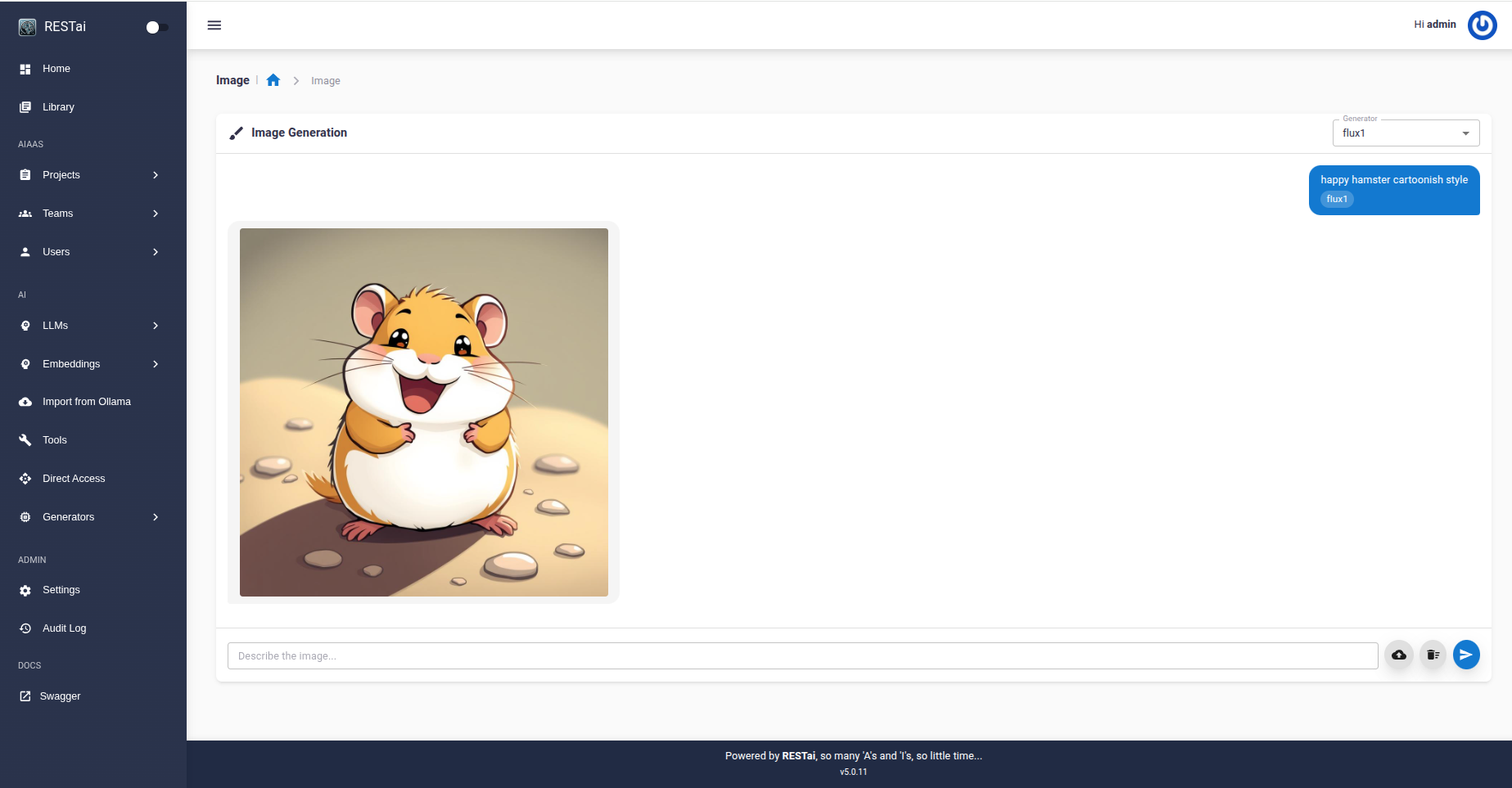

Local and remote image generators loaded dynamically. Supports Stable Diffusion, Flux, DALL-E, RMBG2, and more. Auto-detects NVIDIA GPUs with detailed hardware monitoring.

Multi-tenant with teams, RBAC, custom branding per team (white-labeling), TOTP 2FA, input/output guardrails, and a full audit log.

Automatically keep your RAG knowledge base up-to-date by syncing from external sources on a schedule. Configure per project with independent settings.

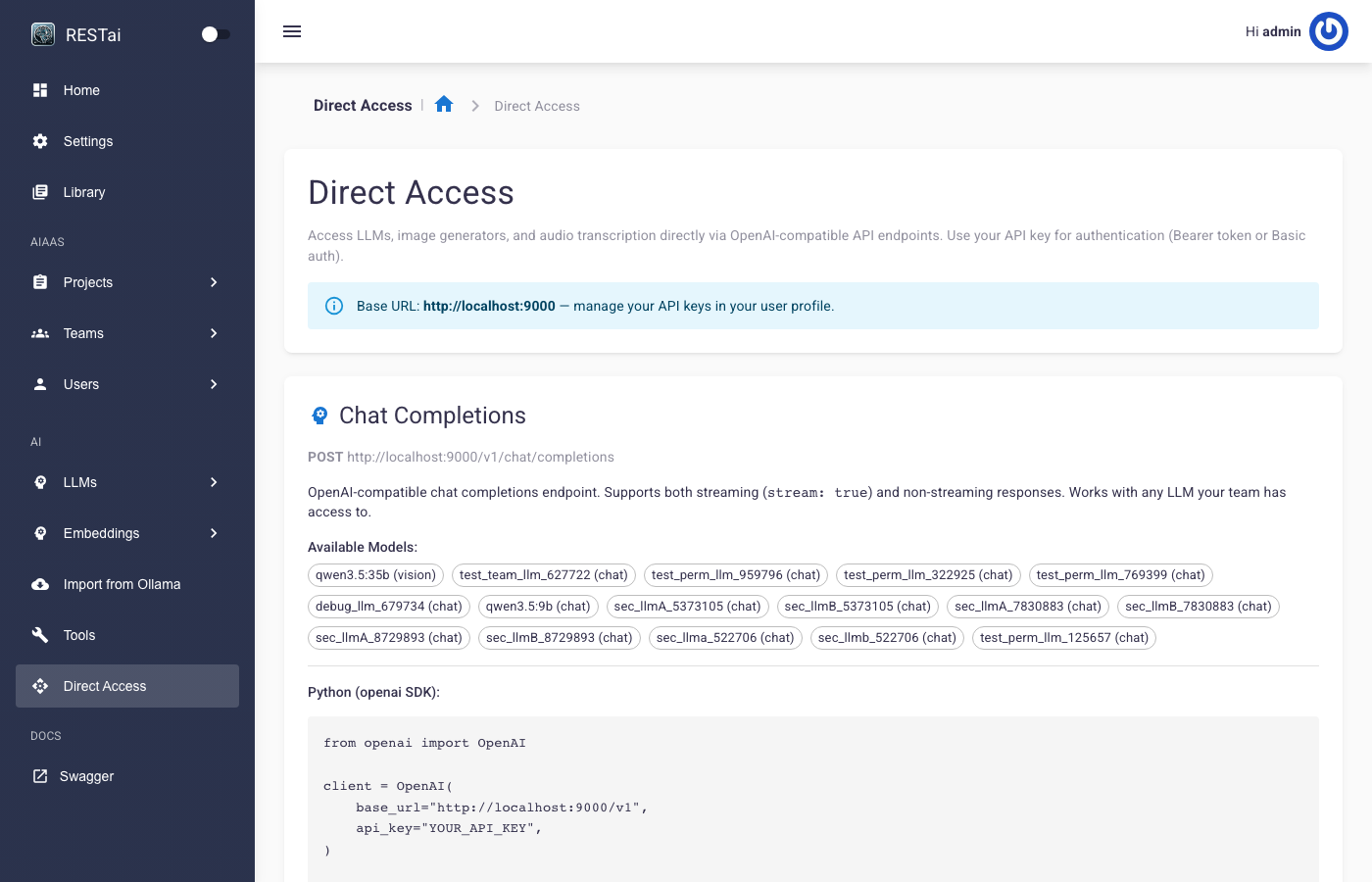

Use LLMs, image generators, and audio transcription directly via OpenAI-compatible endpoints — no project required. Team-level permissions control access, and all usage counts toward budgets.

- POST /v1/chat/completions: Chat with any LLM (streaming supported)
- POST /v1/images/generations: Generate images via DALL-E, Flux, SD, etc.
- POST /v1/audio/transcriptions: Transcribe audio files

Works with any OpenAI-compatible SDK: just point base_url at your RESTai instance.
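For readers who want to see what a call to the chat endpoint might look like without an SDK, here is a minimal sketch using only the Python standard library. The host and port, the API key, the model name `my-llm`, and the use of Bearer-token authentication are assumptions for illustration; check your own instance for the real values.

```python
import json
import urllib.request

# Assumed values: adjust to your own RESTai instance and credentials.
BASE_URL = "http://localhost:9000/v1"
API_KEY = "YOUR_API_KEY"  # placeholder, not a real key


def build_chat_request(prompt: str, model: str = "my-llm") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # hypothetical model name
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",  # assumed auth scheme
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    req = build_chat_request("Hello!")
    # Printing instead of sending keeps the example runnable offline.
    print(req.get_method(), req.full_url)
```

Calling `send(build_chat_request("Hello!"))` against a running instance would perform the actual request. An OpenAI-compatible SDK should produce an equivalent request once its `base_url` points at the same address.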

Works with any LLM supported by LlamaIndex. Context windows are configurable, and chat memory is managed automatically.

    # Install
    pip install restai-core

    # Setup database
    restai init
    restai migrate

    # Start server
    restai serve

    # Open http://localhost:9000/admin
    # Login: admin / admin

    # Clone and install
    git clone https://github.com/apocas/restai
    cd restai && make install

    # Start dev server
    make dev

    # Open http://localhost:9000/admin
    # Login: admin / admin

With env file: restai serve -e .env -p 8080 -w 4

Docker: docker compose --env-file .env up --build